Imagine listening to someone read aloud and writing down every word they say. Word Error Rate (WER) counts how many mistakes you made, relative to how many words were actually spoken. It tells you how good or bad a speech-to-text system is: a low WER means fewer mistakes and better accuracy. The sections below explain how WER is calculated and how to bring it down.

Key Takeaways

- WER measures how many mistakes a speech-to-text system makes by comparing its output to a reference transcript of what was actually said.

- A low WER (like under 10%) means the system transcribed speech very accurately.

- WER counts errors like missing words, extra words, or wrong words in the transcription.

- It helps us see how well the system is working and where it needs improvement.

- The goal is to get a WER as low as possible for better speech recognition accuracy.

As an affiliate, we earn on qualifying purchases.

What Is WER and Why Does It Matter in Speech Recognition?

Have you ever wondered how accurately speech recognition systems understand what you say? That is what WER, or Word Error Rate, measures: the share of words a system gets wrong when converting speech to text. Every word counts, because a single error can change the meaning of a sentence or make the text confusing. WER matters because it gives a simple, common yardstick for comparing different systems or successive versions of the same one. A low WER means the system recognizes speech accurately, while a high WER indicates more mistakes. Error analysis goes a step further and shows developers which words or sounds cause the most problems, so they can refine the recognition models. To be meaningful, WER should be measured on diverse speech samples, so the score reflects how the system performs across speakers and recording conditions.

What Do Good and Bad WER Scores Look Like? Real-Life Examples

What do good and bad WER scores actually look like in real-life scenarios? With high-quality audio and a thoroughly trained model, you will often see WER below 10%, which means the transcription contains few mistakes. For example, a clear recording of a lecture transcribed by a well-trained model might have a WER around 5%, or roughly 5 errors for every 100 words spoken. On the other hand, poor audio quality, background noise, or inadequate training can push WER to 30% or more, which you might see in noisy environments or with unoptimized models. These examples show how much good audio and proper model training matter for a reliable speech-to-text system. Paying attention to the signal-to-noise ratio of your recording environment helps improve clarity and accuracy, and monitoring WER consistently helps you spot areas for improvement over time.

How Is WER Calculated? A Simple Explanation of the Formula

Understanding how WER is calculated helps you grasp what the numbers really mean. You compare the system's transcript to a reference transcript of what was actually said and count three kinds of errors: substitutions (a wrong word), deletions (a missing word), and insertions (an extra word). The formula is simple: WER = (S + D + I) / N × 100, where S, D, and I are the three error counts and N is the number of words in the reference. For example, 10 errors against a 100-word reference give a WER of 10%. Because insertions add errors without adding reference words, WER can exceed 100% on very poor transcripts. Lower WER indicates higher accuracy, but keep in mind that WER weights every error equally, even though some errors matter more than others depending on the context. Knowing which error types dominate is the starting point for error analysis, which pinpoints specific issues to target for improvement. Recording conditions matter too: outdoors, wind and other ambient noise degrade the audio and raise WER. And when transcripts contain sensitive information, how that data is protected deserves attention as well.
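
The counting described above can be sketched in Python. This is a minimal illustration rather than the scoring code of any particular toolkit: it assumes both transcripts are already normalized (lowercased, punctuation removed) and split on whitespace, and the function name `wer_breakdown` is made up for this example. It aligns the two word sequences with a standard edit-distance table and keeps track of which error types the cheapest alignment uses.

```python
def wer_breakdown(reference: str, hypothesis: str) -> dict:
    """Count substitutions, deletions, and insertions, then compute WER in percent.

    Assumes plain whitespace tokenization; real evaluations usually
    normalize case and punctuation first.
    """
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Each cell holds (errors, substitutions, deletions, insertions)
    # for the best alignment of ref[:i] with hyp[:j].
    prev = [(j, 0, 0, j) for j in range(len(hyp) + 1)]  # empty reference: all insertions
    for i in range(1, len(ref) + 1):
        curr = [(i, 0, i, 0)]  # empty hypothesis: all deletions
        for j in range(1, len(hyp) + 1):
            e, s, d, n = prev[j - 1]
            if ref[i - 1] == hyp[j - 1]:
                diag = (e, s, d, n)          # words match, no new error
            else:
                diag = (e + 1, s + 1, d, n)  # substitution
            e, s, d, n = prev[j]
            delete = (e + 1, s, d + 1, n)    # reference word missing from hypothesis
            e, s, d, n = curr[j - 1]
            insert = (e + 1, s, d, n + 1)    # extra word in hypothesis
            curr.append(min(diag, delete, insert))  # lowest error count wins
        prev = curr

    errors, subs, dels, ins = prev[-1]
    return {
        "substitutions": subs,
        "deletions": dels,
        "insertions": ins,
        "reference_words": len(ref),
        "wer": 100 * errors / len(ref),
    }


if __name__ == "__main__":
    print(wer_breakdown("the cat sat on the mat", "the cat sit on mat"))
```

For the sample pair, the alignment contains one substitution ("sat" became "sit") and one deletion (the second "the"), so the WER is 2 errors over 6 reference words, about 33.3%.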

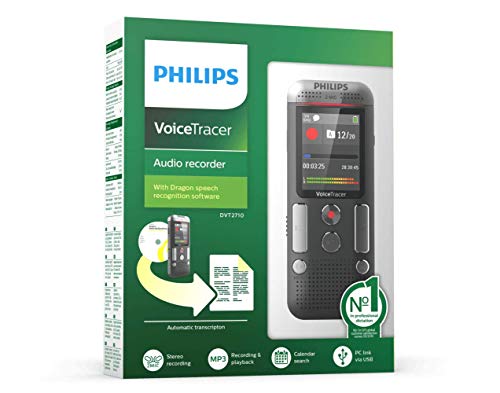

Philips Voice Tracer DVT2710 with Speech Recognition Software

Dragon speech recognition software included

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

How WER Compares to Other Speech Recognition Accuracy Metrics

While WER is the most commonly used metric for evaluating speech recognition accuracy, it helps to know how it compares to other measurements like CER (Character Error Rate), SER (Sentence Error Rate), and accuracy percentages. WER counts word-level errors, which makes error analysis straightforward. CER counts the same kinds of errors at the character level, giving a more granular view that is useful for languages with complex scripts or without clear word boundaries, such as Chinese or Japanese. SER measures how often a sentence contains at least one error, which gives insight into overall transcription reliability. Accuracy percentages, often reported as 100% minus WER, show the proportion of correct output but do not detail specific errors. Understanding these metrics helps you choose the most appropriate evaluation method for your needs, especially when comparing system performance across diverse languages and applications.
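
To make the comparison concrete, here is a small Python sketch that computes WER, CER, and SER over the same set of transcript pairs. It rests on a few assumptions that are not from any specific toolkit: text is already normalized, CER counts spaces as characters (conventions differ on this), and the helper names `edit_distance` and `error_rates` are invented for the example.

```python
def edit_distance(ref, hyp) -> int:
    """Minimum substitutions + deletions + insertions to turn ref into hyp.

    Works on any sequences: lists of words for WER, strings for CER.
    """
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            curr.append(min(
                prev[j] + 1,             # deletion
                curr[j - 1] + 1,         # insertion
                prev[j - 1] + (r != h),  # match (cost 0) or substitution (cost 1)
            ))
        prev = curr
    return prev[-1]


def error_rates(pairs) -> dict:
    """Compute WER, CER, and SER (all in percent) for (reference, hypothesis) pairs."""
    word_errors = sum(edit_distance(r.split(), h.split()) for r, h in pairs)
    word_total = sum(len(r.split()) for r, _ in pairs)
    char_errors = sum(edit_distance(r, h) for r, h in pairs)
    char_total = sum(len(r) for r, _ in pairs)
    bad_sentences = sum(r.split() != h.split() for r, h in pairs)
    return {
        "wer": 100 * word_errors / word_total,
        "cer": 100 * char_errors / char_total,
        "ser": 100 * bad_sentences / len(pairs),
    }


if __name__ == "__main__":
    pairs = [
        ("the cat sat", "the cat sat"),
        ("hello world", "hallo world"),
    ]
    print(error_rates(pairs))
```

In this toy set a single wrong letter yields a WER of 20% (1 of 5 words), a CER of about 4.5% (1 of 22 characters), and an SER of 50% (1 of 2 sentences), which shows how the same mistake looks small or large depending on the unit being counted.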

How to Improve Speech Recognition Accuracy and Lower WER

Improving speech recognition accuracy and lowering WER requires attention to both the quality of your audio input and the capabilities of your recognition system. Start with noise reduction to minimize background sounds that can confuse the system, since clear, crisp audio makes words easier to distinguish. Next, invest in speaker training, where the system learns your voice and pronunciation patterns. This customization improves accuracy, especially for less common accents or speech styles. Regularly updating your system with new data and corrections also helps it adapt over time. Combining effective noise reduction with speaker training gives the recognition system the best chance to perform well, reducing errors and making your transcriptions more reliable.

Frequently Asked Questions

How Does WER Impact Everyday Voice Assistant Performance?

A low WER means your voice assistant mishears you less often, even when accents and speaking styles vary. When WER is high, the assistant struggles to understand your commands, leading to wrong actions and frustration. By reducing WER, developers help your voice assistant recognize diverse accents and speech patterns, making your experience smoother and more accurate, no matter how you speak.

Can WER Differences Be Caused by Accent or Dialect Variations?

Accents and dialect variations can really throw WER off, like a puzzle with missing pieces. When you speak with an accent or in a dialect the system saw little of during training, it may struggle to understand you, which increases errors. Different pronunciations, slang, and speech patterns make it harder for the model to match your words accurately. So, yes, WER differences often stem from these linguistic differences, and recognition tends to be less precise for speech styles that are underrepresented in the training data.

Is WER Relevant for Evaluating Real-Time Transcription Services?

Yes, WER is relevant for evaluating real-time transcription services because it helps you understand how accurately the system captures speech. While contextual accuracy matters, WER highlights how noise interference and accents can impact transcription quality. By monitoring WER, you can identify issues caused by background sounds or dialect variations, ensuring the service provides clearer, more reliable results in real-time scenarios.
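
One way to do that kind of monitoring is to compute WER separately for each recording condition or speaker group. The sketch below is illustrative only: the group labels, the sample sentences, and the function names `word_errors` and `wer_by_group` are all made up, and the text is assumed to be normalized already.

```python
from collections import defaultdict


def word_errors(reference: str, hypothesis: str) -> int:
    """Word-level edit distance (substitutions + deletions + insertions)."""
    ref, hyp = reference.split(), hypothesis.split()
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (r != h)))
        prev = curr
    return prev[-1]


def wer_by_group(samples) -> dict:
    """Return WER in percent per group for (group, reference, hypothesis) samples.

    Errors and reference words are pooled per group before dividing,
    so longer utterances carry proportionally more weight.
    """
    errors = defaultdict(int)
    words = defaultdict(int)
    for group, reference, hypothesis in samples:
        errors[group] += word_errors(reference, hypothesis)
        words[group] += len(reference.split())
    return {g: 100 * errors[g] / words[g] for g in words if words[g]}


if __name__ == "__main__":
    # Hypothetical samples, invented purely to show the grouping.
    samples = [
        ("quiet", "turn on the lights", "turn on the lights"),
        ("noisy", "turn on the lights", "turn in the light"),
        ("noisy", "play some music", "play music"),
    ]
    for group, wer in wer_by_group(samples).items():
        print(f"{group}: {wer:.1f}% WER")
```

A gap between groups, such as the higher score for the made-up "noisy" samples here, points to where the audio pipeline or the training data needs attention.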

How Does WER Relate to User Satisfaction With Speech Apps?

A lower WER often goes hand in hand with higher user satisfaction, especially when speech apps handle linguistic diversity and context well. Users tend to be more satisfied when an app accurately interprets their accents and slang. When the transcription matches your spoken words, you are more likely to trust and prefer the app, which makes WER a key factor in the overall experience and in how much you enjoy using speech-to-text tools daily.

Are There Industry Standards for Acceptable WER Levels?

There is no universal standard for an acceptable WER. Companies set their own thresholds based on user expectations and application needs, though many aim for benchmarks below 10%. As a rule of thumb, a WER under 10% generally indicates good performance. Reaching that level boosts user satisfaction and helps keep your speech app reliable and effective in real-world use.

Conclusion

Think of WER like a report card for your speech recognition system. Just as missing a word in a story can change its meaning, a high WER means your system is missing or messing up words. Remember when I first tried dictating a message and it got “hello” as “hallo”? That is a substitution error, exactly the kind of mistake WER counts. Keeping WER low helps your system understand you clearly, making your voice tech as smooth as a well-tuned guitar string.